How AI impacts my productivity

A Lean analysis of the impact of AI in software development

Lean Engineering has provided me with tools I use daily to approach new topics, and developing with the help of AI is one such topic.

So I want to take a step back and reflect on how AI has changed my approach to software development, where it shines, and where it’s still lacking. In this context, I will focus on the basic usage of ChatGPT4 without plugins to go through the following tasks:

Research & planning

New project setup

Writing API-heavy code

Writing tests

Implementing the function to pass those tests

Debugging

Upgrading an existing feature

Disclaimer: AI helped with the header image and enhanced a couple of phrasings

Lean’s approach to productivity

Graphics are courtesy of the wonderful Tycho Tatitscheff, who also taught me these terms. You can follow him on Twitter.

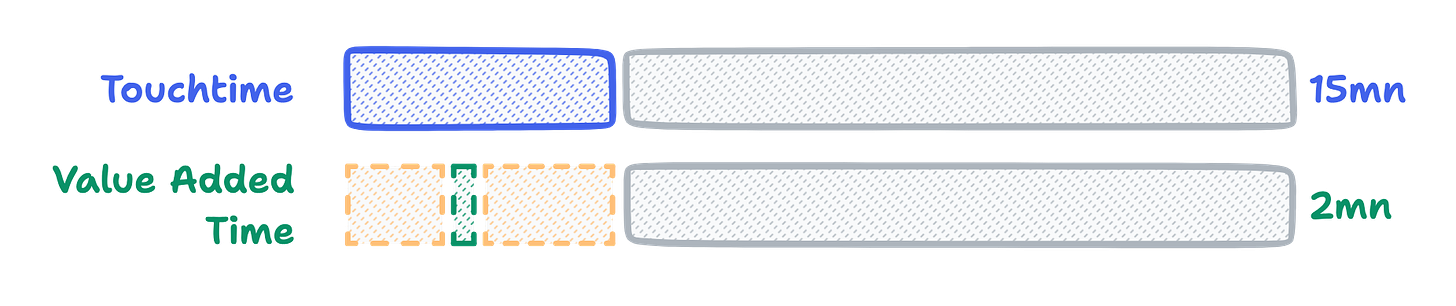

Let’s analyze the development process of a new minor feature in terms of time.

Lead time, in this context, is the time taken to deliver value: the elapsed time between the start of development and the moment the feature reaches production.

Touch time is the time spent concentrating on the task. I tend to include time spent understanding the business process, testing out tools, and technical planning, on top of the development process itself.

Value-added time is the time spent producing pure value, and most of it is time spent coding. By coding, I mean actively typing code to introduce the feature; refactoring, documentation, reading, and so on don’t count. This means the chart is actually pretty optimistic: in this case, value-added time would probably be around 20 minutes.

The gray part is off-time. It delays the lead time, but it’s not time spent actively working on this feature.

And what about the yellow parts? They’re waste, pure waste: time spent understanding the business, planning things, waiting for the CI to run, (testing)… Basically, everything you wish you didn’t have to do.

As a craftsman and maker, increasing my productivity means reducing my lead time by:

Reducing off-time in between touch time

Reducing touch time by:

Reducing the required value-added time

Getting value-added time/touch time ratio as close as possible to 100% by:

Eliminating waste

AI’s impact

Now that we speak the same language, how has ChatGPT4 impacted my productivity? Well, it’s been an insightful investigation. Of course, the honest answer is “It depends”. And for that, I’ll analyze a couple of everyday tasks.

Research and planning

Remember, research and planning are actually waste and should be eliminated as much as possible.

ChatGPT4 has been a massive help for me when dealing with unknown languages or domains.

For example, I’m researching how to “extend the memory” of ChatGPT. Asking the following got me started quickly:

I'm trying to "extend the memory" of ChatGPT when using its API, as I'm limited by context size. What are the steps to achieve this programatically?

I then ask for details for each step and an explanation of the relevant terms.

What are the steps once I get a new response to store it? (even better if you can reference a step it provided previously)

What’s a vector database?

Thanks to the responses:

I have a detailed plan of the required steps to achieve my target result

I know the relevant terms to Google instead of guessing

This allowed me to reduce my waste for research and planning by ~80%.
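For readers new to the term, the core idea behind a vector database can be sketched in a few lines. This is a toy illustration, not the plan ChatGPT produced: `MemoryStore`, `StoredEntry`, and `cosineSimilarity` are hypothetical names, and the hand-written three-number vectors stand in for embeddings that a real setup would get from an embedding model.

```typescript
// Toy in-memory "vector store": keeps (text, vector) pairs and returns the
// texts whose vectors are closest to a query vector (cosine similarity).
type StoredEntry = { text: string; vector: number[] };

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // A zero vector has no direction, so treat it as "not similar".
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

class MemoryStore {
  private readonly entries: StoredEntry[] = [];

  add(text: string, vector: number[]): void {
    this.entries.push({ text, vector });
  }

  // Returns the `topK` stored texts most similar to the query vector.
  search(query: number[], topK = 1): string[] {
    return this.entries
      .map((entry) => ({ text: entry.text, score: cosineSimilarity(entry.vector, query) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, topK)
      .map((scored) => scored.text);
  }
}

// Assumed example data: in practice the vectors come from an embedding model.
const memory = new MemoryStore();
memory.add("User prefers TypeScript", [1, 0, 0]);
memory.add("User is building a Chrome extension", [0, 1, 0]);
const recalled = memory.search([0.9, 0.1, 0], 1);
```

Past exchanges are stored with their vectors, and the closest ones are pulled back into the prompt when a new question arrives, which is one way to work around the context-size limit.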

New project setup

The “getting started” task has probably been the one most impacted by my usage of AI. While I started out giving precise instructions, I’ve become a lot less specific in my directions over time.

In a non-AI-enabled approach, I would have to

Search for the best language/framework/libraries combo

Set up my machine if I’m missing tools

Go through the getting started documentation and run the commands

Look for ways to tailor the solution to my standards

Make sure it runs

At most, in 1h of touch time, my value-added time is around 2 minutes, as most frameworks give you command lines to copy-paste in your terminal to set things up.

But with AI, the result looks completely different because my process is the following:

Write out my specs:

I want to setup a blog. Give me the commands and code to achieve this:

Use NextJS

Articles written in markdown files

Possibility to create custom components in React render part of the articles

Uses TS, Eslint, Prettier

Wait for it to produce the response

Copy-paste terminal commands

Copy-paste file contents

Review

Fix/improve

So while the value-added time doesn’t change, in this case, the ratio goes from 3% to 13% as less time is spent getting to the commands to run.
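The arithmetic behind those two percentages is simple enough to write down. In this sketch, `valueAddedRatio` is a hypothetical helper, and the 15 minutes of touch time with AI is an assumption back-calculated from the 13% figure; only the 1h baseline is stated above.

```typescript
// Share of touch time that is value-added time, as a percentage.
function valueAddedRatio(valueAddedMinutes: number, touchMinutes: number): number {
  if (touchMinutes <= 0) {
    throw new Error("touchMinutes must be positive");
  }
  return (valueAddedMinutes / touchMinutes) * 100;
}

// Without AI: 2 minutes of value-added time in 1h of touch time.
const ratioWithoutAi = valueAddedRatio(2, 60); // about 3.3

// With AI: same 2 minutes, but an ASSUMED 15 minutes of touch time.
const ratioWithAi = valueAddedRatio(2, 15); // about 13.3
```

The value-added time stays at 2 minutes in both cases; only the denominator shrinks.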

One caveat to this approach is that it relies on known libraries and frameworks. This means that it’s impacted by ChatGPT4’s knowledge cutoff and may require a lot of specifications to achieve what I consider to be a good result.

The bad:

In my case, I had to specify NextJS, as it kept recommending create-react-app otherwise.

I had to clean up some of the base configs it provided

The good:

I get the commands immediately

It recommended using .mdx files (Markdown with React) and used a library to get them to work with Next, which saved me time.

So a lousy prompt can quickly turn this time-saving process into extra cost. When using this approach, I try to stay with the most common tools (in this case, React, Next, …) to achieve the best results.

Writing API-heavy code

The notion of API here refers to the API of SDKs, libraries, or frameworks, not only HTTP requests.

One of my top uses of ChatGPT4 is to achieve HTML DOM manipulation. It works well because the API has been stable for a while, and it’s probably been trained on many examples. So I can give out a task such as:

Give me a function to watch for every button element that’s right after a textarea element and change the text content to “PUSH THIS!”.

The code usually works instantly, and it also works with much more complex examples. A task that has often cost me a lot of time with constant interruptions to dig through MDN’s docs is now almost instantaneous.
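For illustration, here is one plausible shape of an answer to that prompt. It is a sketch, not ChatGPT’s actual output: `relabelButtons`, `watchButtons`, and the `Queryable` type are hypothetical names, and the change notification is passed in as a callback so the logic can also run outside a browser.

```typescript
const LABEL = "PUSH THIS!";
// "textarea + button" matches a <button> that is the element right after a <textarea>.
const SELECTOR = "textarea + button";

// Minimal shape of what the sketch needs; Document and Element both satisfy it.
interface Queryable {
  querySelectorAll(selector: string): ArrayLike<{ textContent: string | null }>;
}

// Relabels every matching button and returns how many were changed.
function relabelButtons(root: Queryable): number {
  const buttons = root.querySelectorAll(SELECTOR);
  let changed = 0;
  for (let i = 0; i < buttons.length; i++) {
    // Skip buttons that are already relabelled, so that our own writes
    // do not keep re-triggering a mutation observer.
    if (buttons[i].textContent !== LABEL) {
      buttons[i].textContent = LABEL;
      changed++;
    }
  }
  return changed;
}

// Relabels once, then again every time `subscribe` reports a DOM change.
function watchButtons(root: Queryable, subscribe: (onChange: () => void) => void): void {
  relabelButtons(root);
  subscribe(() => {
    relabelButtons(root);
  });
}

// Browser wiring (not executed here, it needs a DOM):
// watchButtons(document, (onChange) =>
//   new MutationObserver(onChange).observe(document.body, { childList: true, subtree: true }),
// );
```

The adjacent-sibling selector does the “right after a textarea” part, and a MutationObserver covers buttons added to the page later on.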

Unfortunately, without plugins or using an agent to fetch extra context, it’s impossible to achieve the same result with up-to-date libraries that it hasn’t been trained on. In this case, I’m back to Googling.

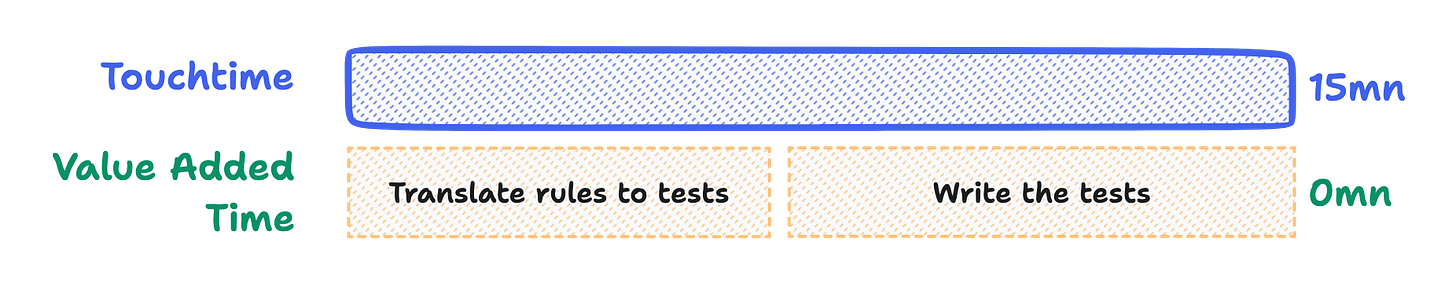

Writing tests

I’ll work on the principle that unit tests, while considered waste, reduce waste later on.

AI may be the answer to spreading TDD (more on this in a later post). Writing tests often feels like a drag and requires a lot of practice to get efficient with. For complicated business rules, translating them to tests can also be time-consuming.

This is where ChatGPT4 helps me. For example:

In TS, with jest, give me a set of tests to comply with the following rules for "getTermStartDate"

The input is a date and a term duration in months (1,3,6 or 12)

It should always return the start of the current period based on the input date

The first term of the year always starts at january 1st

I know it’s pretty simple, so here is how it fared.

The good:

My rules were turned into a good set of tests to check my function

It covers every case I could have thought of

The bad:

Not every test is meaningful, and some are repetitive. In this case, it focused too much on the “January 1st” rule and gave me 2 tests to check it. While not very problematic, it can raise the waste of maintaining those tests later.

Overall, it’s an excellent starting point and provides a working first draft of the required specs, all from business rules that could mostly have been written by a non-technical person.

Once again, this is a considerable reduction in waste time, primarily because ChatGPT handled the translation into code.
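To give an idea of what the rules translate into, here is a sketch of a handful of cases, written as a plain table rather than the Jest output ChatGPT actually produced (which isn’t reproduced here). The dates, `termCases`, and `failingCases` are illustrative assumptions, and the expected values assume terms are aligned on the calendar year.

```typescript
type TermDuration = 1 | 3 | 6 | 12;

// One row per rule-derived case; dates are ISO days, interpreted as UTC.
type TermCase = { input: string; term: TermDuration; expectedStart: string };

const termCases: TermCase[] = [
  { input: "2023-01-01", term: 1, expectedStart: "2023-01-01" }, // first term starts January 1st
  { input: "2023-05-17", term: 1, expectedStart: "2023-05-01" }, // monthly term
  { input: "2023-05-17", term: 3, expectedStart: "2023-04-01" }, // second quarter
  { input: "2023-05-17", term: 6, expectedStart: "2023-01-01" }, // first half-year
  { input: "2023-12-31", term: 6, expectedStart: "2023-07-01" }, // second half-year
  { input: "2023-12-31", term: 12, expectedStart: "2023-01-01" }, // yearly term
];

// Runs every case against an implementation and returns the ones that fail.
function failingCases(
  impl: (date: Date, term: TermDuration) => Date,
): TermCase[] {
  return termCases.filter((testCase) => {
    const actual = impl(new Date(testCase.input + "T00:00:00Z"), testCase.term);
    return actual.toISOString().slice(0, 10) !== testCase.expectedStart;
  });
}
```

In Jest, the same table could be handed to `test.each` so that each row shows up as its own test.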

Implementing the function to pass those tests

This is where most of the value-added time can be found.

Whenever I give it the tests, ChatGPT4 is very efficient in implementing the function itself. Some back-and-forth may be required by giving it the test runner error output, but I’ve achieved good results.

In a case where the context is still small and previous messages are included, I usually just write:

Implement the getTermStartDate method to pass those tests using luxon

Here, I didn’t have anything to write. The function was provided right alongside the test cases. Value-added time is reduced to (almost) 0, along with the touch time, and my effectiveness ratio goes to nearly 100%. It’s clearly a win.

However, there’s a significant limitation in realizing this objective. Optimizing ChatGPT-4’s ability to generate code requires careful planning and breaking down extensive features into smaller, more manageable functions.
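For reference, here is a minimal sketch of what such a function can look like. It isn’t ChatGPT’s answer: it uses the built-in Date in UTC instead of luxon to stay dependency-free, and it assumes terms are aligned on the calendar year, which is one reading of the rules above.

```typescript
type TermDuration = 1 | 3 | 6 | 12;

// Returns the first day (UTC midnight) of the term that contains `date`.
// Terms are assumed to be aligned on the calendar year, so the first term
// always starts on January 1st.
function getTermStartDate(date: Date, termMonths: TermDuration): Date {
  const month = date.getUTCMonth(); // 0 = January, 11 = December
  // Round the month down to the nearest multiple of the term duration.
  const startMonth = Math.floor(month / termMonths) * termMonths;
  return new Date(Date.UTC(date.getUTCFullYear(), startMonth, 1));
}

// May 17th with a 3-month term falls in the quarter starting April 1st.
const quarterStart = getTermStartDate(new Date("2023-05-17T10:00:00Z"), 3);
// quarterStart.toISOString() is "2023-04-01T00:00:00.000Z"
```

Because 1, 3, 6, and 12 all divide 12, rounding the month down is enough to cover every allowed duration.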

Fortunately, if you remember “Research and planning”, it can also help you with that.

Debugging

I haven’t been able to achieve complex debugging with ChatGPT-4, for a couple of reasons.

First, debugging is mostly about bisecting (dichotomy) and requires opening and going through multiple files to determine where the issue is. Thus, debugging involves a lot of context, most of the time more than GPT4 can handle.

Second, it requires back and forth between the code and your API/Website/Logs/… to retrigger and read the error. It should be possible with some advanced AI integration (see Langchain agents), but GPT4 alone can’t do so.

So no net gain on this side, although it once provided a helpful guess about where to look within a small buggy function.

Upgrading an existing feature

This is an area where ChatGPT4 both shines and lacks. So here’s a quick summary:

I use it for:

Explaining a new language: With it, I was able to understand and write Python code, a language I had never used before, in a matter of minutes.

Explaining small functions: When checking out the jumbled-up code of another GPT Chrome extension (I don’t get how they can move around in their codebase), it gave me a good summary of a function with way too many responsibilities:

Loads conversation from nodes.

Retrieves user data (name, avatar) and settings.

Reconstructs conversation by traversing nodes.

Renders messages for user and assistant.

Inserts rendered messages into DOM.

Sets up event listeners and updates UI elements.

Refactoring: By telling it to adhere to DRY, SOLID, DDD, TDD, and as many acronyms as I could think of, it gave me basic refactoring steps, which I can then ask it to implement:

Extract helper functions for better readability and separation of concerns.

Utilize async/await with Promisified chrome.storage API.

Replace for loop with Array.prototype.map().

Simplify DOM manipulation with a more straightforward approach.

Create a separate function to set up event listeners and update UI elements.

Updating small independent functions: I’ve successfully got GPT4 to update functions under ~40 lines.
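To make two of the refactoring steps listed above concrete (extracting a helper and replacing a for loop with `Array.prototype.map()`), here is a small before/after sketch. The `ChatMessage` type and the function names are hypothetical; they are not taken from the extension’s code.

```typescript
type ChatMessage = { role: "user" | "assistant"; text: string };

// Before: one function that both iterates and formats.
function renderMessagesWithLoop(messages: ChatMessage[]): string[] {
  const rendered: string[] = [];
  for (let i = 0; i < messages.length; i++) {
    rendered.push(messages[i].role + ": " + messages[i].text);
  }
  return rendered;
}

// After: the formatting lives in a small helper...
function renderMessage(message: ChatMessage): string {
  return `${message.role}: ${message.text}`;
}

// ...and the loop becomes a map over that helper.
function renderMessages(messages: ChatMessage[]): string[] {
  return messages.map(renderMessage);
}

const conversation: ChatMessage[] = [
  { role: "user", text: "Hi" },
  { role: "assistant", text: "Hello!" },
];
const before = renderMessagesWithLoop(conversation);
const after = renderMessages(conversation);
```

Both versions return the same strings; the second one is simply small enough to hand to GPT4 one function at a time.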

I don’t use it for:

Tasks spanning many functions: too much context is required to be effective, and the answer is often cut off by the max response size.

Updating big functions: Same reason. I try to ask for a refactor first (or do it myself). Otherwise, the response is cut off.

Updating functions dependent on others: Sometimes works, but the other function names must be very explicit, and the chat won’t know about available functions that aren’t in its context.

The context of working on an existing feature varies too much for me to do any accurate measurement. But all in all, it’s still a net gain. Either I reduce my lead time, or it remains the same.

Where ChatGPT4 hasn’t helped me yet

Please keep in mind that while it’s been a very effective tool for increasing my productivity, there are a couple of areas where I’ve been unable to use AI:

Up-to-date APIs: When developing my Chrome extension, it primarily recommends the deprecated v2 Chrome API, so I now have to rely on the docs. This might be fixed by ingesting the documentation first and providing it as context.

UI: I haven’t managed to get it to produce a good complex layout which is not a default “getting started layout”. Also, every time I’ve tried, there’s been too much back and forth, maxing out the context. I guess that low-code/no-code platforms are the best equipped to deal with this.

Summing it up

Considering everything I’ve talked about, in almost every situation, GPT4 increased my productivity. It got me to:

Reduce my lead time

Reach the first value-added time task faster (the fun part)

Raise my value-added time/touch time ratio

Get to production more quickly (my guess is that it would have taken me 3x longer to release my Chrome extension without it)

What’s remarkable is that this isn’t just a tool replacing another with its typical trade-offs. It’s just a pure boost that speeds up all my coding activities. So if you’re not already a user, I advise you to at least try it out and form your own opinion.

That was a long read. If you’ve reached this point, thanks a lot for reading through 😉.

As always, if you found this helpful, it would significantly help me if you could share this post.

In my next one, I’ll talk a bit more about my AI-enabled development environment. See you next time…